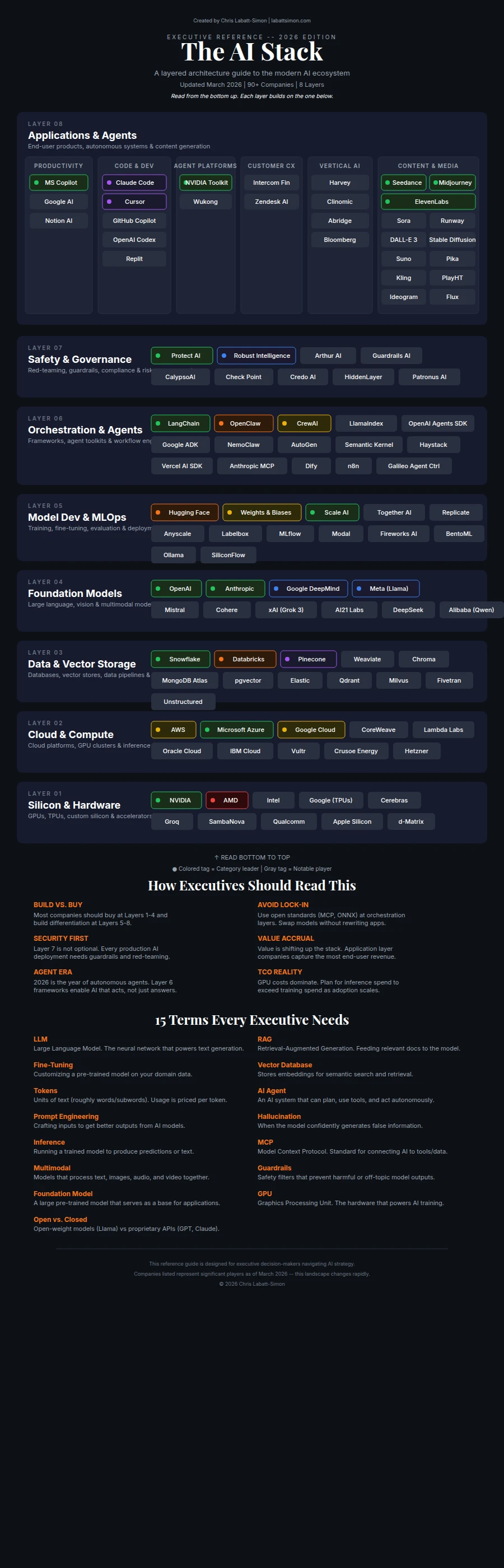

The AI Stack: A Map of Who Powers Enterprise AI in 2026

A colleague asked me to share with him how the AI stack works and who the leaders are. I ended up doing about 30 hours of research for him and I may as well share it with everyone. I plan to keep it updated quarterly, if not monthly. I basically looked at who consumed the biggest portion of the news cycle and who had super high public valuations. It does not mean the software is great. It just means people use it or they are doing something outside the box.

I broke AI down into eight layers. I will admit I had AI help me organize my work to make it clearer than the ramblings of notes I jotted down.

Here is what each of those layers is, why it matters, and who is competing in each one. I have included my take on how each layer affects mid-market companies specifically, because that is the audience I work with and the context I actually understand. Enterprise advice from someone who has never operated a $50M-$500M company is just noise.

March 2026 Update: What Changed

Since the original version of this article, a lot has shifted across every layer of the stack. Here is what is new or updated in this March 2026 revision:

NVIDIA announced the Vera Rubin architecture at GTC 2026 and completed its $20B acquisition of Groq

Anthropic released Claude 5 Preview on March 10, with full launch expected Q2 2026

OpenAI shipped GPT-5.4 on March 5 with a 272K token context window

Google began rolling out Gemini 3.x previews, with Gemini 2.5 deprecating in June

OpenAI launched the Agents SDK (replacing Swarm) with native MCP support

Google released the Agent Development Kit (ADK) built around the A2A protocol

NVIDIA introduced NemoClaw for long-running enterprise agent workflows

Galileo released Agent Control, an open source control plane for agent governance

DeepSeek V4 is imminent at 1 trillion parameters with a 1M token context window

The EU AI Act reaches full enforcement on August 2, 2026

xAI released Grok 3, competitive on reasoning benchmarks, plus Grok Imagine for image generation

Content and Media Generation category added to Layer 8, covering Seedance, Sora, Midjourney, ElevenLabs, and more

Claude Code added as leader in Code and Dev: #1 AI coding tool at 75% developer usage, 42% market share, 18.7M weekly active users

Cursor upgraded to leader in Code and Dev at 42% usage and fastest growth rate

GitHub Copilot moved from leader to notable in Code and Dev after dropping to 35% usage

OpenAI Codex added as notable player in Code and Dev at 26% usage with explosive growth

CrewAI moved from leader to notable player in Agent Frameworks (prototyping tool, not production-grade)

Claude Agent SDK added as notable player in Agent Frameworks (tool-use loops, sandboxed execution, safety focus)

NemoClaw updated to reflect its role within the broader NVIDIA Agent Toolkit

CalypsoAI updated to F5 AI Guardrails following acquisition by F5

Robust Intelligence removed as standalone (acquired by Cisco in 2024)

Added note on AI security consolidation: cybersecurity incumbents acquiring AI-native startups

Galileo Agent Control added as notable player in Safety and Governance (open source, Apache 2.0)

Layer 1: Silicon and Hardware

This is the physical foundation. AI training and inference need massive parallel processing power that regular CPUs cannot deliver, so the industry designed dedicated AI accelerators from the ground up. The edge AI market alone hit $4.44 billion in 2025, and it is growing fast as more companies push inference closer to the user.

Why this layer matters for mid-market companies

You probably will not buy AI chips directly. But you need to understand this layer because it determines the cost, speed, and availability of everything built on top of it. When NVIDIA has a supply shortage, your cloud AI costs go up. When new chip architectures hit the market, new pricing options open up for you.

The players

NVIDIA continues to dominate with roughly 80-90% market share in AI training chips. Their H100 and B100/B200 GPUs remain the standard. But the big news from GTC 2026 was the Vera Rubin platform, their next-generation architecture that combines new silicon with a redesigned interconnect for multi-chip scaling. The CUDA software ecosystem still locks everyone in. And NVIDIA made a $20 billion move by acquiring Groq, which now operates as an NVIDIA subsidiary. The Groq 3 LPU (Language Processing Unit) gives NVIDIA a dedicated inference play with speeds that are still 10-20x faster than traditional GPU setups for certain model architectures. That acquisition tells you where NVIDIA thinks the money is shifting: from training to inference.

AMD is rolling out their Helios architecture to compete with both Blackwell and Vera Rubin. Their MI300X chip has been gaining traction with cloud providers looking to reduce NVIDIA dependency. Microsoft Azure and Meta have both publicly deployed AMD chips for AI workloads. The price-performance ratio is competitive, but the software ecosystem (ROCm) is still catching up to CUDA. Helios is AMD's bet that they can close that gap.

Google builds custom TPUs (Tensor Processing Units) that power their own AI services. TPU v5p is available through Google Cloud and is genuinely competitive for large-scale training and inference, particularly for transformer models. Google keeps these mostly captive for their own Gemini workloads, but third-party access is improving.

Intel has had a rough stretch. Their 18A process node is delayed again, and their Gaudi 3 accelerator exists but adoption has been limited compared to NVIDIA and AMD. Intel is still trying to find its footing in AI silicon, and the delays are not helping.

Apple deserves mention because their M-series chips have become surprisingly viable for local AI inference (the Mac Studio's M3 Ultra configuration supports up to 512GB of unified memory). A Mac Studio can run large models locally. That matters for mid-market companies that want to keep data on-premises without investing in enterprise GPU servers.

Edge AI players: Hailo is building dedicated AI processors for edge devices, cameras, and industrial equipment. Their chips run inference at low power with no cloud dependency. Qualcomm is pushing AI processing into mobile and IoT devices with their Snapdragon AI Engine. Both of these matter if you are thinking about AI at the edge rather than in the data center. The edge AI market is real and it is where a lot of practical deployment is happening outside the hype cycle.

Layer 2: Cloud and Compute Infrastructure

This layer provides the data centers and cloud platforms that give businesses on-demand access to AI computing power. Most organizations cannot afford to build their own AI infrastructure, so cloud providers rent out the GPU capacity needed to train and run models.

Why this layer matters

For mid-market companies, this is where most of your AI spending goes. The choice between cloud providers (or between cloud and on-premises) directly affects your costs, data sovereignty, and vendor lock-in risk. The big three are all growing fast, but at different rates and with different strategies.

The players

AWS offers AI compute through SageMaker, Bedrock (managed multi-model hosting), and direct EC2 GPU instances. Bedrock now supports a wide roster of models from Anthropic, Meta, Mistral, and others, making it a genuine multi-model platform. AWS is also pushing their own silicon with Trainium3 chips for cost-effective training. AWS AI revenue grew about 17% year over year. Their advantage remains ecosystem breadth. If you are already on AWS, adding AI workloads is the path of least resistance.

Microsoft Azure has the deepest partnership with OpenAI, giving Azure customers priority access to GPT-5.4 and other OpenAI models. Azure also introduced Provisioned Throughput Units (PTUs) for predictable pricing on high-volume AI workloads, which is a big deal for companies tired of unpredictable API bills. Azure AI revenue grew 39% year over year, the fastest of the big three, driven largely by the OpenAI integration. For companies already using Microsoft 365, the integration story is hard to beat.

Google Cloud Platform (GCP) offers Vertex AI with native Gemini model integration plus TPU v5p compute. GCP AI revenue grew over 30% year over year. Their advantage is pricing and the fact that Gemini models run natively on their infrastructure with no middleman. GCP is often 20-30% cheaper than AWS for equivalent GPU compute, particularly for sustained workloads.

DigitalOcean entered the AI compute space and is worth watching for startups and smaller mid-market companies. They will not compete with AWS on scale, but their simplicity and pricing make them attractive for teams that do not need enterprise-grade everything.

Smaller players: Lambda Labs (GPU cloud focused exclusively on AI), CoreWeave (backed by NVIDIA, built specifically for AI workloads), and Hetzner (European provider with excellent price-performance for inference workloads, where I personally run several AI deployments).

The on-premises option

Cloud is not the only answer. For companies processing sensitive data (healthcare, financial services, legal), running AI locally eliminates the data sovereignty question entirely. A single NVIDIA A100 server ($15,000-30,000) can handle most mid-market AI workloads. The math changes when your monthly cloud AI spend exceeds $2,000-3,000. At that point, owning hardware often makes more financial sense.
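The $2,000-3,000 threshold is easier to reason about as a payback period. Here is a minimal sketch that assumes a one-time hardware price and a flat monthly cloud bill. The `monthly_onprem_opex` term (power, hosting, maintenance) is my own assumption, because I have not put a number on it above.

```python
def breakeven_months(hardware_cost: float, monthly_cloud_spend: float,
                     monthly_onprem_opex: float = 0.0) -> float:
    """Months until owning hardware costs less than renting cloud capacity.

    hardware_cost: up-front purchase price of the server.
    monthly_cloud_spend: what you currently pay a cloud provider per month.
    monthly_onprem_opex: assumed running cost of the owned server (power,
        colocation, maintenance); defaults to zero for simplicity.
    """
    monthly_savings = monthly_cloud_spend - monthly_onprem_opex
    if monthly_savings <= 0:
        return float("inf")  # owning never pays off
    return hardware_cost / monthly_savings


print(breakeven_months(30_000, 2_500))       # 12.0 months
print(breakeven_months(30_000, 2_500, 500))  # 15.0 months with $500/month running costs
```

Suppose a $30,000 server replaces a $2,500 monthly bill. It pays for itself in about a year, and running costs push that out a few months.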

Layer 3: Data Infrastructure

AI models are only as good as the data they can access, and this layer handles storing, organizing, and retrieving that data. The big story in 2026 is convergence: the major data platforms are absorbing vector database functionality, AI model serving, and agent tooling into their core products.

Why this layer matters

This is where most AI projects fail. Not because the models are bad, but because the data pipeline is broken. If your AI cannot find the right information in your internal systems, it will hallucinate or give generic answers that add no value.

The converging platforms

Databricks has gone all-in on AI integration. Unity Catalog provides unified data governance across your entire lakehouse. Mosaic AI Agent Bricks lets you build and deploy AI agents directly within Databricks. And they now have native Vector Search built into the platform, so you do not need a separate vector database for RAG workloads. If you are already on Databricks for analytics, adding AI feels natural.

Snowflake launched Cortex AI with multimodal capabilities and their own Arctic LLMs that run natively inside Snowflake. Like Databricks, Snowflake now has a native vector database built in. The pitch is simple: your data never leaves Snowflake, and AI runs where the data already lives. For regulated industries, that matters a lot.

Both platforms having native vector databases is a big deal. It means you can do semantic search, RAG, and embedding-based retrieval without spinning up and managing a separate system. For mid-market companies, this simplifies the architecture significantly.
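At its simplest, "embedding-based retrieval" means ranking documents by how close their vectors are to the query's vector. This brute-force sketch uses cosine similarity on made-up three-dimensional vectors. Real embeddings come from an embedding model and have hundreds or thousands of dimensions. Production vector search also uses approximate nearest-neighbor indexes such as HNSW rather than scanning every document.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: list[float], docs: dict[str, list[float]], k: int = 2) -> list[str]:
    """Return the ids of the k documents whose embeddings are closest to the query."""
    ranked = sorted(docs, key=lambda doc_id: cosine_similarity(query, docs[doc_id]),
                    reverse=True)
    return ranked[:k]


# Toy "embeddings" for illustration only.
docs = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.1],
    "returns-faq": [0.8, 0.2, 0.1],
}
query = [1.0, 0.0, 0.0]  # imagine: "how do I get my money back?"
print(top_k(query, docs))  # ['refund-policy', 'returns-faq']
```

In a RAG setup, the retrieved documents are pasted into the model's prompt as context.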

Standalone vector databases

Standalone vector databases still make sense for specialized workloads or if you are not already on Databricks or Snowflake.

Pinecone is the most popular managed vector database. Easy to set up, scales well, and has strong integration with most AI frameworks. The trade-off is that your data lives on their servers.

Qdrant is my default recommendation for mid-market deployments that need a standalone solution. Open source, runs on a single server with 32GB RAM, handles 50,000+ documents with sub-50ms search latency. Your data stays on your infrastructure.

Milvus is the choice for larger deployments that need distributed scaling. More operational complexity, but it handles millions of documents across multiple servers.

Weaviate offers both managed and self-hosted options with strong hybrid search (combining vector and keyword search).

Chroma is lightweight and popular for prototyping and smaller deployments. It runs embedded in your application, which simplifies architecture but limits scalability.
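Hybrid search, which Weaviate is known for, blends a keyword score with a vector score. The sketch below is a generic alpha-weighted fusion over min-max normalized scores. It is not Weaviate's code, and its own fusion algorithms differ in detail. The document ids and scores are hypothetical.

```python
def min_max_normalize(scores: dict[str, float]) -> dict[str, float]:
    """Rescale scores to [0, 1] so keyword and vector scores are comparable."""
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {doc_id: 1.0 for doc_id in scores}
    return {doc_id: (s - lo) / (hi - lo) for doc_id, s in scores.items()}


def hybrid_rank(keyword_scores: dict[str, float],
                vector_scores: dict[str, float],
                alpha: float = 0.5) -> list[tuple[str, float]]:
    """Blend scores: alpha=1.0 is pure vector search, alpha=0.0 is pure keyword."""
    kw = min_max_normalize(keyword_scores)
    vec = min_max_normalize(vector_scores)
    fused = {
        doc_id: alpha * vec.get(doc_id, 0.0) + (1 - alpha) * kw.get(doc_id, 0.0)
        for doc_id in set(kw) | set(vec)
    }
    return sorted(fused.items(), key=lambda item: item[1], reverse=True)


# Hypothetical scores: a keyword engine loves the exact invoice number,
# while the vector engine prefers semantically related pages.
keyword_scores = {"invoice-INV-2041": 12.0, "billing-overview": 4.0, "payment-terms": 2.0}
vector_scores = {"billing-overview": 0.91, "payment-terms": 0.88, "invoice-INV-2041": 0.60}

print(hybrid_rank(keyword_scores, vector_scores, alpha=0.5))
print(hybrid_rank(keyword_scores, vector_scores, alpha=0.2))  # leans on keywords
```

This is why hybrid search helps with part numbers, invoice IDs, and other exact strings. Pure vector search tends to blur these.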

Traditional data infrastructure

Your AI also needs access to structured data in PostgreSQL, MySQL, or whatever your operational databases are. And ETL/ELT tools (Fivetran, Airbyte) remain critical for keeping your AI's knowledge base current with fresh data from your operational systems.
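Keeping a knowledge base current usually means incremental syncs rather than full reloads. Here is a minimal sketch of the "high-water mark" pattern. Python's built-in `sqlite3` stands in for both the operational database and the AI's document store. The `documents` table and its columns are hypothetical.

```python
import sqlite3


def sync_new_rows(source: sqlite3.Connection, target: sqlite3.Connection,
                  watermark: str) -> str:
    """Copy rows changed since `watermark` and return the new watermark.

    Assumes both databases have documents(id PRIMARY KEY, body, updated_at)
    with updated_at stored as ISO-8601 text, so string order equals time order.
    """
    rows = source.execute(
        "SELECT id, body, updated_at FROM documents "
        "WHERE updated_at > ? ORDER BY updated_at",
        (watermark,),
    ).fetchall()
    # Upsert so re-edited documents overwrite stale copies in the knowledge base.
    target.executemany(
        "INSERT INTO documents (id, body, updated_at) VALUES (?, ?, ?) "
        "ON CONFLICT(id) DO UPDATE SET body = excluded.body, "
        "updated_at = excluded.updated_at",
        rows,
    )
    target.commit()
    return rows[-1][2] if rows else watermark


schema = "CREATE TABLE documents (id INTEGER PRIMARY KEY, body TEXT, updated_at TEXT)"
src, dst = sqlite3.connect(":memory:"), sqlite3.connect(":memory:")
src.execute(schema)
dst.execute(schema)
src.executemany("INSERT INTO documents VALUES (?, ?, ?)", [
    (1, "Refund policy v1", "2026-03-01T09:00:00"),
    (2, "Shipping times", "2026-03-02T10:00:00"),
])

wm = sync_new_rows(src, dst, "1970-01-01T00:00:00")  # initial load
src.execute("UPDATE documents SET body = ?, updated_at = ? WHERE id = 1",
            ("Refund policy v2", "2026-03-05T08:00:00"))
wm = sync_new_rows(src, dst, wm)                      # only the edited row moves
print(wm, dst.execute("SELECT body FROM documents WHERE id = 1").fetchone()[0])
```

A production pipeline also has to handle deleted rows and rows that share a timestamp with the watermark. That bookkeeping is part of what managed tools like Fivetran and Airbyte take off your plate.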

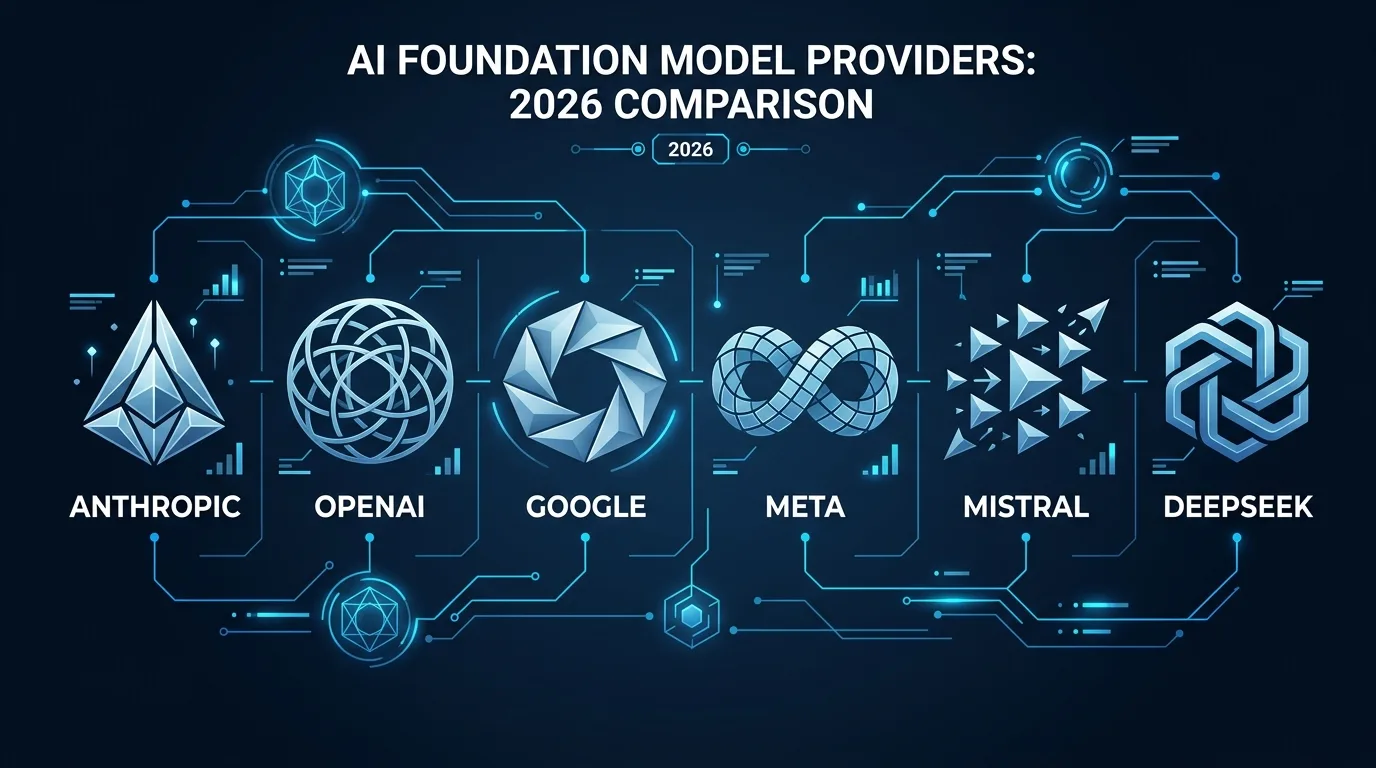

Layer 4: Foundation Models

These are the large-scale AI models that understand and generate language, code, and images. They are the base intelligence that everything else in the stack builds on. March 2026 has been one of the busiest months for model releases I have ever seen.

Why this layer matters

The choice of foundation model affects the quality, cost, and speed of every AI feature you build. And the landscape changes quarterly. A model that was state-of-the-art six months ago might now be outperformed by something 10x cheaper.

The frontier models

Anthropic (Claude) remains my primary recommendation for enterprise deployments. The Claude 4 family launched in May 2025 with Opus and Sonnet tiers, and a Haiku tier followed later in the 4.x line. Claude Opus 4 leads on complex reasoning and code generation. Claude Sonnet 4 is the best balance of capability and cost. Then on March 10, Anthropic dropped Claude 5 Preview, with the full launch expected in Q2 2026. If the preview is any indication, the jump from Claude 4 to 5 is significant. Their safety research still goes deeper than any other lab, which matters when your compliance team starts asking questions.

OpenAI (GPT) released GPT-5.4 on March 5, 2026 with a 272K token context window. Pricing landed at $10 per million input tokens and $30 per million output tokens. It is a strong model but not the runaway leader it used to be. The ecosystem of tools built around OpenAI's API is still the largest, and their new Agents SDK (which replaced the experimental Swarm framework) ships with native MCP support.
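Per-million-token pricing is hard to feel until you multiply it out. Here is a rough monthly estimator at the $10 input and $30 output rates quoted above. It assumes fixed token counts per request and ignores any caching or batch discounts. Real traffic varies, so treat the result as an order of magnitude.

```python
INPUT_PRICE_PER_M = 10.00   # USD per million input tokens (as quoted above)
OUTPUT_PRICE_PER_M = 30.00  # USD per million output tokens


def monthly_api_cost(requests_per_day: int, input_tokens: int,
                     output_tokens: int, days: int = 30) -> float:
    """Estimate API spend for a workload with fixed per-request token counts."""
    total_in = input_tokens * requests_per_day * days
    total_out = output_tokens * requests_per_day * days
    return (total_in * INPUT_PRICE_PER_M + total_out * OUTPUT_PRICE_PER_M) / 1_000_000


# 1,000 requests a day, each with a 2,000-token prompt and a 500-token answer.
print(f"${monthly_api_cost(1_000, 2_000, 500):,.2f} per month")        # $1,050.00 per month
# A single request that fills the whole 272K context window.
print(f"${monthly_api_cost(1, 272_000, 0, days=1):.2f} per request")  # $2.72 per request
```

The second line is the practical warning. A huge context window is a capability, not a default, and stuffing it on every call gets expensive quickly.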

Google (Gemini) is in transition. Gemini 2.5 is being deprecated in June 2026, and Gemini 3.x previews are already available. The 3.x line shows major improvements in reasoning and multimodal capabilities (text, image, video, audio in one model). Google's pricing remains aggressive, and Gemini Flash continues to be one of the best cost-performance options for high-volume workloads.

xAI (Grok) entered the picture with Grok 3, which is competitive on reasoning benchmarks, and Grok Imagine for image generation. Having another well-funded lab in the mix keeps pricing pressure on everyone else.

The open source frontier

Open source models are where cost-conscious companies should pay attention.

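Meta's Llama family remains one of the most widely deployed open source model families. Llama 3.1 scales up to 405B parameters, and Llama 4 (Scout and Maverick, both mixture-of-experts models) shipped in April 2025. The Llama 3 70B model runs on a Mac Studio with quantization and performs competitively with commercial models for most business tasks.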
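Whether a model "runs on a Mac Studio" mostly comes down to memory. Here is a back-of-the-envelope check that assumes weight memory ≈ parameters × bits per weight ÷ 8. It adds an assumed 20% margin for the KV cache and runtime. Real overhead depends on context length and the inference engine.

```python
def weight_memory_gb(params_billion: float, bits_per_param: int) -> float:
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_param / 8


def fits_in_memory(params_billion: float, bits_per_param: int,
                   memory_gb: float, overhead: float = 1.2) -> bool:
    """Rough fit check; `overhead` is an assumed margin, not a measured figure."""
    return weight_memory_gb(params_billion, bits_per_param) * overhead <= memory_gb


print(weight_memory_gb(70, 16))    # 140.0 GB at 16-bit precision
print(weight_memory_gb(70, 4))     # 35.0 GB with 4-bit quantization
print(fits_in_memory(70, 4, 64))   # True: fits a 64GB machine
print(fits_in_memory(70, 16, 64))  # False: full precision does not
```

Quantization is what makes local deployment realistic. It trades a small amount of quality for a 4x cut in memory.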

DeepSeek V4 is imminent, reportedly with 1 trillion parameters and a 1 million token context window. Their V3 models already match or exceed GPT-4 on code generation and mathematical reasoning at a fraction of the cost. V4 could reshape the cost equation for companies willing to use Chinese-developed models.

Mistral from France continues with strong performance in smaller model sizes. Their Mixtral architecture (mixture of experts) provides good efficiency. No major new releases yet in 2026, but they remain solid for European companies with data sovereignty requirements.

Alibaba's Qwen series keeps pushing small-model performance. Models that run entirely on a phone or laptop with no cloud dependency change the economics of edge AI deployment.

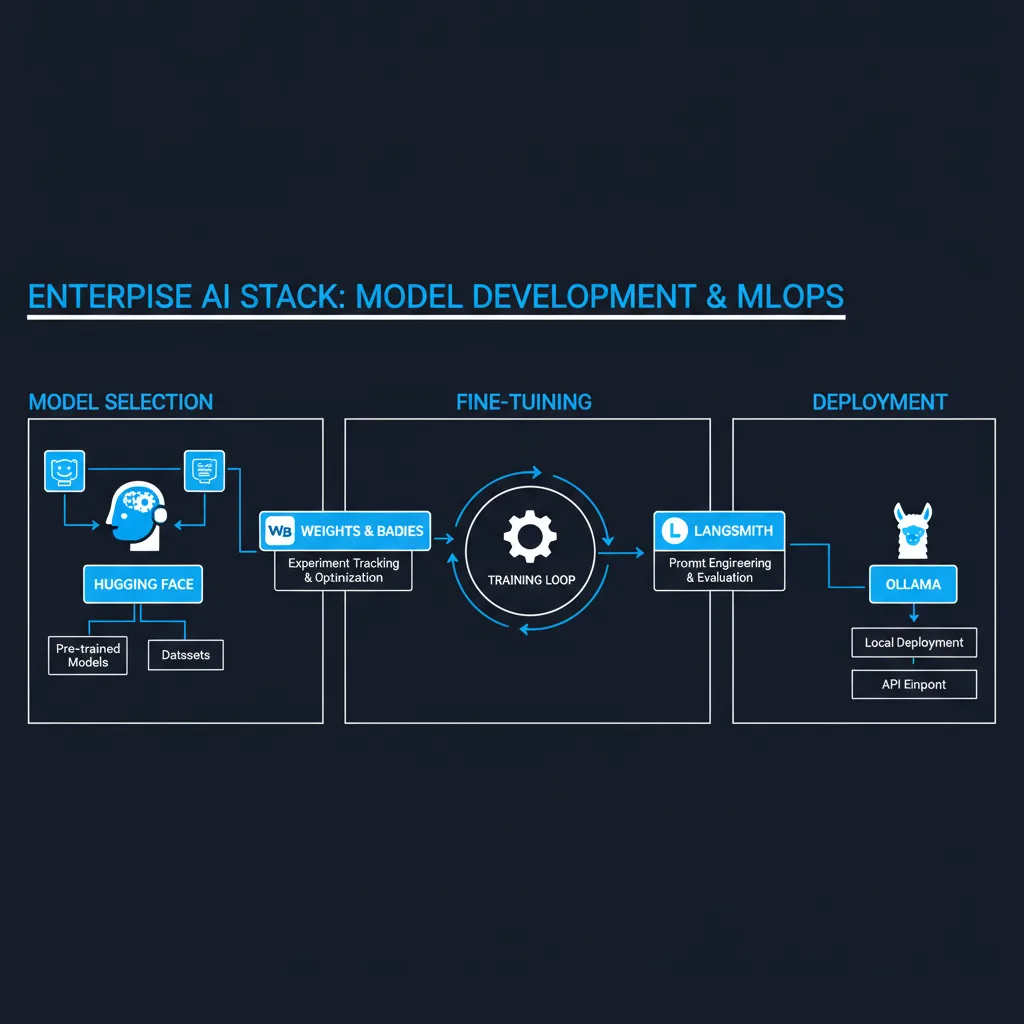

Layer 5: Model Development and MLOps

This layer provides the tools developers use to find, fine-tune, evaluate, and deploy AI models. This is where raw foundation models get customized and made production-ready for your specific business needs.

Why this layer matters

Most mid-market companies will not train models from scratch. But you may need to fine-tune models on your specific data, evaluate which model works best for your use case, and deploy models reliably in production. This layer provides those tools.

The players

Hugging Face is the dominant hub for AI models and the GitHub of AI. Over 500,000 models available for download, plus tools for fine-tuning, evaluation, and deployment. If you are doing anything with open source AI, you will interact with Hugging Face.

AWS SageMaker provides end-to-end ML model development, training, and deployment within the AWS ecosystem. It has gotten better at LLM fine-tuning and now integrates with Bedrock for managed model serving.

Databricks Mosaic AI (formerly MosaicML) handles training and fine-tuning within the Databricks platform. If you are already on Databricks for data, adding model development in the same environment simplifies operations.

MLflow is the open source standard for experiment tracking, model versioning, and deployment pipelines. Works with most cloud platforms and training frameworks. Databricks maintains it but it runs anywhere.

Kubeflow handles ML pipelines on Kubernetes. More operational overhead than managed options, but gives you full control over your infrastructure.

Weights & Biases provides experiment tracking and model evaluation. When you are testing different models or fine-tuning approaches, W&B helps you track what works and why.

Dataiku offers a visual platform for building and deploying ML models that is accessible to teams without deep ML engineering experience. Good for mid-market companies that want ML capabilities without hiring a full ML team.

Neptune.ai focuses on experiment tracking and model metadata management. Smaller than W&B but well-regarded for its clean interface and flexibility.

SiliconFlow is worth watching as an inference optimization platform. They focus on making model serving cheaper and faster, which matters once you are running models in production at scale.

Ollama has become the standard for running models locally on developer machines. Simple installation, supports most popular open source models, and makes local inference accessible to non-specialists.
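Ollama also exposes a local HTTP API, which is how your own applications talk to it. Here is a minimal sketch using only the Python standard library. It assumes Ollama is running on its default port (11434) and that a model named `llama3` has been pulled with `ollama pull llama3`. Swap in whatever model you actually run.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint


def build_generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send a prompt to a locally running Ollama server and return its text reply."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["response"]  # non-streaming replies carry the text in "response"
```

With the server running, `generate("llama3", "Summarize our refund policy in one sentence.")` returns the model's answer. None of the data leaves the machine.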

Layer 6: Orchestration and Agent Frameworks

This layer provides the software that lets AI agents plan multi-step tasks, use external tools, and take actions autonomously. It is what turns a basic AI model from something that just answers questions into something that can actually do work across multiple systems. Two big new entrants landed in early 2026.

Why this layer matters

This is the layer that delivers the most business value for mid-market companies. Single-turn chat interactions are nice, but they do not transform operations. Agents that can plan a sequence of actions, execute them across your tools (Jira, Salesforce, GitHub, Slack), and validate their own work are what drive real ROI.
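Stripped of any particular framework, the plan, execute, and validate pattern is a short loop. This is a sketch only. The planner and the self-check are hard-coded stand-ins for decisions a model would make. `create_ticket` and `post_message` are hypothetical placeholders for real Jira or Slack integrations.

```python
from typing import Callable


# Hypothetical tools standing in for real integrations.
def create_ticket(summary: str) -> str:
    return f"TICKET-1: {summary}"


def post_message(text: str) -> str:
    return f"posted: {text}"


TOOLS: dict[str, Callable[[str], str]] = {
    "create_ticket": create_ticket,
    "post_message": post_message,
}


def plan(goal: str) -> list[tuple[str, str]]:
    """Stand-in for the model's planning step: an ordered list of tool calls."""
    return [("create_ticket", goal), ("post_message", f"Opened a ticket for: {goal}")]


def validate(tool: str, result: str) -> bool:
    """Stand-in for a self-check: each tool's output must look as expected."""
    expected_prefix = {"create_ticket": "TICKET-", "post_message": "posted:"}
    return result.startswith(expected_prefix[tool])


def run_agent(goal: str) -> list[str]:
    """Execute the plan step by step, stopping at the first failed check."""
    results = []
    for tool, argument in plan(goal):
        result = TOOLS[tool](argument)
        if not validate(tool, result):
            raise RuntimeError(f"{tool} failed validation: {result!r}")
        results.append(result)
    return results


print(run_agent("Customer cannot reset password"))
```

Frameworks differ mainly in what they wrap around this loop: state, retries, human approval, and which model does the planning.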

The players

OpenClaw remains the breakout platform. Open source, runs locally, supports multi-agent orchestration. NVIDIA's Jensen Huang called it "the most important software release probably ever." It is what I run my own agent fleet on. See how a fractional CAIO builds an enterprise AI stack for a practical implementation guide.

LangChain/LangGraph is the most production-ready orchestration framework as of early 2026. LangGraph handles complex stateful agent workflows with checkpointing, branching, and human-in-the-loop patterns. The ecosystem is massive and battle-tested.

OpenAI Agents SDK is new. It replaced the experimental Swarm framework and ships with native MCP (Model Context Protocol) support. This matters because MCP is becoming the standard way for agents to connect to external tools and data sources. OpenAI building it in natively signals where the industry is heading.
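Under the hood, MCP messages are JSON-RPC 2.0. Here is a minimal sketch of the two requests a client typically sends: one lists a server's tools and one calls a tool. It only builds the messages. The transport (stdio or HTTP) is omitted, and `search_tickets` is a hypothetical tool name.

```python
import itertools
import json
from typing import Optional

_ids = itertools.count(1)  # JSON-RPC request ids must be unique per session


def jsonrpc_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request, the message format MCP is built on."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Ask an MCP server which tools it offers, then call one of them.
list_tools = jsonrpc_request("tools/list")
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "search_tickets", "arguments": {"query": "password reset"}},
)
print(list_tools)
print(call_tool)
```

Because every MCP-compatible agent speaks this same shape, a tool server written once can be reused across frameworks.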

Google Agent Development Kit (ADK) is also new, built around the A2A (Agent-to-Agent) protocol for cross-framework agent communication. The idea is that agents built in different frameworks can talk to each other through a standard protocol. Whether A2A gets adoption outside Google's ecosystem remains to be seen, but the concept is right.

CrewAI is a notable player that focuses on multi-agent collaboration. You define agents with specific roles and they work together on complex tasks. It is best suited as a prototyping tool rather than a production-grade solution. Be aware it can use roughly 3x the tokens of equivalent LangGraph implementations. Good for rapid proof-of-concept work, but teams running production workloads should look at LangGraph, OpenClaw, or the OpenAI Agents SDK instead.

AutoGen/AG2 (Microsoft) provides async multi-agent conversations. Agents can be assigned different roles and collaborate to solve problems through structured dialogue. The async architecture makes it well-suited for long-running workflows.

NemoClaw is part of the broader NVIDIA Agent Toolkit, their enterprise agent platform announced at GTC 2026. Built for long-running autonomous tasks. Plans, executes, self-checks, and recovers from errors over multi-hour workflows. Runs on any chip despite being built by NVIDIA. NemoClaw works alongside other components in the NVIDIA Agent Toolkit to provide a full agent stack from chip to orchestration.
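Recovering from errors over multi-hour workflows mostly comes down to two habits. The first is checkpointing each finished step so a restart does not redo it. The second is retrying transient failures with backoff. The following is a generic sketch of that pattern, not NemoClaw's API. The step names and checkpoint file are hypothetical.

```python
import json
import tempfile
import time
from pathlib import Path
from typing import Callable


def run_with_checkpoints(steps: list[tuple[str, Callable[[], str]]],
                         checkpoint: Path, max_retries: int = 3,
                         base_delay: float = 1.0) -> dict[str, str]:
    """Run named steps in order, saving results so a restart skips finished work."""
    done: dict[str, str] = json.loads(checkpoint.read_text()) if checkpoint.exists() else {}
    for name, step in steps:
        if name in done:
            continue  # finished in an earlier run
        for attempt in range(max_retries):
            try:
                done[name] = step()
                break
            except Exception:  # in practice, retry only errors known to be transient
                if attempt == max_retries - 1:
                    raise  # give up; earlier steps are already checkpointed
                time.sleep(base_delay * 2 ** attempt)  # exponential backoff
        checkpoint.write_text(json.dumps(done))
    return done


# Demo: the second step fails once with a transient error, then succeeds.
attempts = {"summarize": 0}


def flaky_summarize() -> str:
    attempts["summarize"] += 1
    if attempts["summarize"] == 1:
        raise ConnectionError("transient network error")
    return "summary written"


with tempfile.TemporaryDirectory() as tmp:
    state_file = Path(tmp) / "agent_state.json"
    steps = [("gather", lambda: "42 tickets collected"), ("summarize", flaky_summarize)]
    first = run_with_checkpoints(steps, state_file, base_delay=0.0)
    # A restart re-reads the checkpoint and re-runs nothing.
    second = run_with_checkpoints(steps, state_file, base_delay=0.0)
    print(first == second, attempts["summarize"])  # True 2
```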

Claude Agent SDK (Anthropic) is a notable player worth watching. It provides tool-use loops with sandboxed execution and a strong safety focus, consistent with Anthropic's overall approach to responsible AI development. Good for teams that want agent capabilities built on Claude models with built-in safety constraints.

Galileo Agent Control (released March 2026) provides runtime governance for agents at scale. See Layer 7 for full details on its open source control plane and enterprise partnerships.

Layer 7: AI Safety and Governance

Every AI deployment creates new security risks (prompt injection, data poisoning, model theft), and this layer builds the defenses. 2026 is the year AI regulation gets real teeth. Multiple jurisdictions are moving from guidelines to enforcement.

Why this layer matters

If you deploy AI without safety and governance, you are exposing your company to data leaks, compliance violations, and reputational risk. 68% of mid-market employees admit to using non-sanctioned AI tools for work. That is your data walking out the door through ChatGPT and Claude personal accounts. And now regulators are watching.

Regulatory landscape

The EU AI Act reaches full enforcement on August 2, 2026, though the Digital Omnibus package may push some provisions to November. If you sell to European customers or process European data with AI, you need to be ready. High-risk AI systems face mandatory conformity assessments, transparency requirements, and human oversight obligations.

China's CAICT (China Academy of Information and Communications Technology) is running AI safety assessments that affect any company operating in or selling to China. Their approach is different from the EU's but equally consequential.

Italy passed criminal penalties for AI-generated deepfakes, one of the first countries to attach jail time to synthetic media misuse. Expect other jurisdictions to follow.

The players

Anthropic continues to lead on self-imposed safety standards. Their Responsible Scaling Policy defines AI Safety Levels (ASL-3 and above) that set internal commitments for how they develop and deploy more capable models. Whether you use Claude or not, Anthropic's safety research is worth following because it influences the entire industry.

Alice (formerly ActiveFence) provides AI guardrails and content safety infrastructure. They help companies filter harmful outputs, enforce content policies, and maintain compliance across AI deployments.

Protect AI focuses on ML supply chain security. They scan models for vulnerabilities before you deploy them. Think of it as security scanning for AI models.

F5 AI Guardrails (formerly CalypsoAI, acquired by F5) provides real-time monitoring and filtering for AI interactions. They sit between your users and the AI model, scanning for data leaks, prompt injection attacks, and policy violations. The F5 acquisition is part of a broader trend: cybersecurity incumbents like F5, Check Point, SentinelOne, and Zscaler are acquiring AI-native security startups, consolidating the AI security market under established enterprise brands.

Arthur AI offers model monitoring and bias detection. They track your AI's outputs over time and alert you when quality degrades or biased patterns emerge.

Guardrails AI provides an open source framework for adding validation and safety checks to LLM outputs. You define rules and the framework enforces them before any output reaches users.
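The idea of defining rules and enforcing them before output reaches users fits in a few lines. This sketch is plain Python, not the Guardrails AI API. The two rules, an email-address check and a length cap, are hypothetical examples of policies you might enforce.

```python
import re
from typing import Callable, Optional

# Each rule returns an error message, or None if the output passes.
Rule = Callable[[str], Optional[str]]


def no_email_addresses(text: str) -> Optional[str]:
    if re.search(r"[\w.+-]+@[\w-]+\.[\w.-]+", text):
        return "output contains an email address"
    return None


def max_length(limit: int) -> Rule:
    def rule(text: str) -> Optional[str]:
        return f"output exceeds {limit} characters" if len(text) > limit else None
    return rule


def validate_output(text: str, rules: list[Rule]) -> list[str]:
    """Run every rule; an empty list means the output may be shown to the user."""
    return [error for rule in rules if (error := rule(text)) is not None]


rules = [no_email_addresses, max_length(200)]
print(validate_output("Your order ships Friday.", rules))                   # []
print(validate_output("Contact jane.doe@example.com for a refund.", rules))
```

In a real deployment, a non-empty list would trigger a block, a redaction, or a request for the model to try again.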

Galileo Agent Control is a notable player in the safety space as well. Released as open source under an Apache 2.0 license, it provides a centralized control plane for governing AI agents at runtime. It enforces policies, monitors agent behavior, and provides visibility into what your agents are doing. AWS, CrewAI, and Glean are among the first integration partners. For companies deploying multiple agents across different frameworks, having a unified governance layer is essential.
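The core idea of a central control plane is that every agent's tool call passes through one policy check, and that check also records the decision. Here is a deliberately small sketch of that idea. The `Policy` class, agent names, and tool names are hypothetical and are not Galileo Agent Control's API.

```python
from dataclasses import dataclass, field


@dataclass
class Policy:
    """Hypothetical central policy: which tools each agent may call."""
    allowed_tools: dict[str, set[str]]
    audit_log: list[tuple[str, str, bool]] = field(default_factory=list)

    def authorize(self, agent: str, tool: str) -> bool:
        allowed = tool in self.allowed_tools.get(agent, set())
        self.audit_log.append((agent, tool, allowed))  # visibility into agent behavior
        return allowed


policy = Policy(allowed_tools={
    "support-agent": {"search_kb", "draft_reply"},
    "finance-agent": {"read_invoices"},
})
print(policy.authorize("support-agent", "draft_reply"))   # True
print(policy.authorize("support-agent", "issue_refund"))  # False: not on its list
print(policy.audit_log)
```

The value comes from every framework calling the same check. One audit log then covers all your agents.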

Internal governance

Beyond third-party tools, every company deploying AI needs internal governance: an AI usage policy, data classification rules, model approval workflows, and regular audits. The fractional CAIO role exists partly because someone needs to own this function and most mid-market companies do not have anyone qualified to build and enforce an AI governance framework from scratch. Our 90-day fractional CAIO roadmap outlines how to stand up that function. With the EU AI Act enforcement date approaching, this is no longer optional.

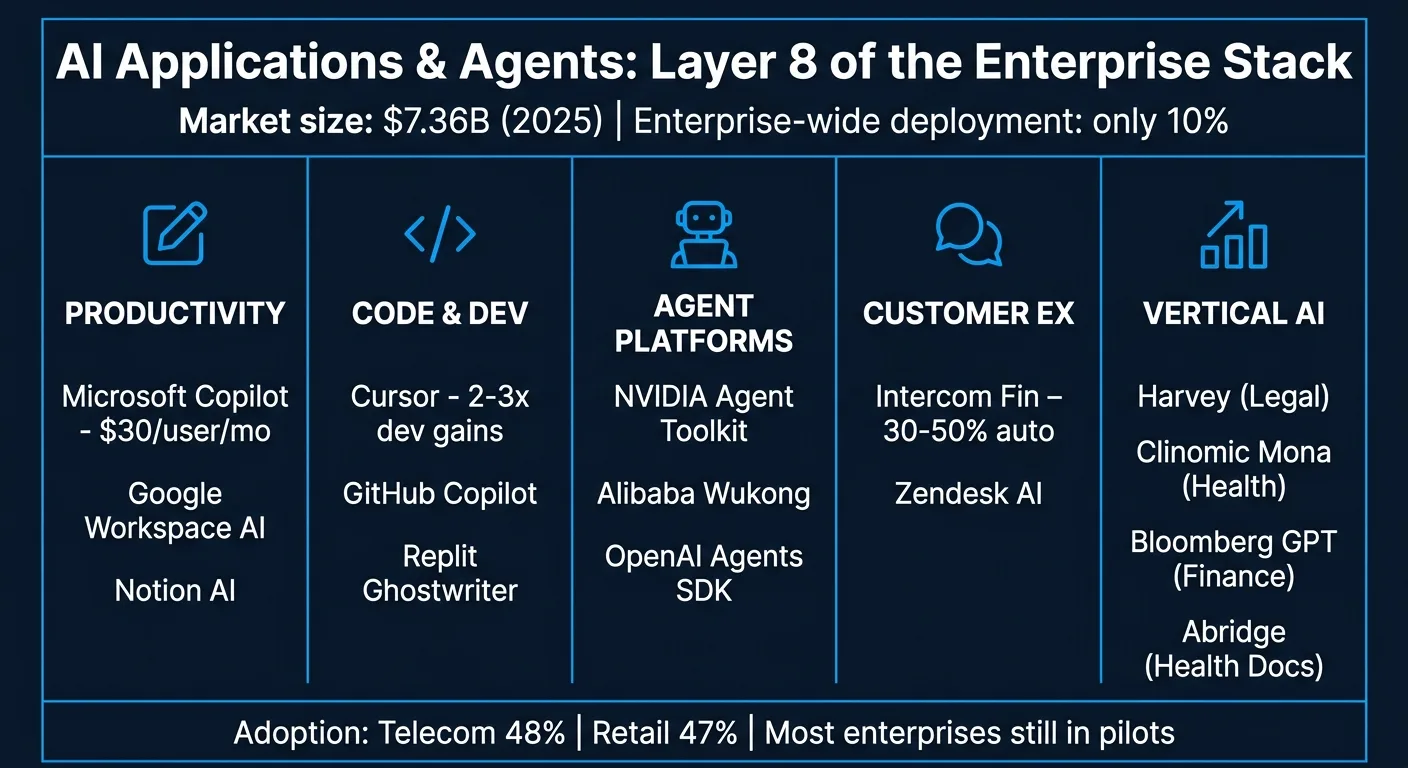

Layer 8: Applications and AI Agents

This is the layer most people actually interact with: the AI-powered tools embedded in the software they already use. The agentic AI market hit $7.36 billion in 2025 and it is accelerating. But the adoption numbers tell an interesting story: telecom leads at 48% adoption, retail follows at 47%, and only 10% of enterprises have deployed AI company-wide. Most are still running pilots and departmental experiments.

Why this layer matters

All the infrastructure, models, and orchestration below exist to power applications that solve real business problems. For mid-market companies, the question is: which applications deliver the most ROI with the least implementation risk?

Productivity and workplace

Microsoft Copilot is embedded across the entire Microsoft 365 suite. It writes emails, summarizes meetings, generates presentations, and queries your organizational data. The advantage is zero integration work if you are already on Microsoft. The disadvantage is cost ($30/user/month adds up fast) and limited customization.
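To see how fast $30/user/month adds up, here is a quick calculation. It assumes list pricing with no volume discount and a seat for every user counted. Most rollouts start with a smaller group.

```python
def annual_seat_cost(users: int, price_per_user_month: float = 30.0) -> float:
    """Annual licence cost for a per-seat AI add-on (default: the $30 Copilot price)."""
    return users * price_per_user_month * 12


for headcount in (50, 200, 500):
    print(f"{headcount} users: ${annual_seat_cost(headcount):,.0f} per year")
# 50 users: $18,000 per year
# 200 users: $72,000 per year
# 500 users: $180,000 per year
```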

Google Workspace AI provides similar capabilities across Google's suite. Docs, Sheets, Slides, and Gmail all have AI assistants. Competitive with Copilot, and often cheaper for organizations already on Google Workspace.

Notion AI has become popular for smaller teams. AI-powered writing, summarization, and knowledge management within Notion's workspace. Less enterprise-grade than Copilot but more accessible.

Code and development

Claude Code (Anthropic) is the #1 AI coding tool in 2026 with 75% developer usage, 42% market share, and 18.7 million weekly active users. It operates as an agentic coding assistant that lives in your terminal, understands your entire codebase, and can plan and execute multi-file changes autonomously. Developers report it handles complex refactors, bug investigations, and feature implementations that other tools struggle with. The combination of Claude's reasoning capabilities and the agentic execution loop makes it the current leader.

Cursor is the other leader in this space, an AI-native code editor at 42% usage and the fastest growth rate in the category. It understands your entire codebase and can generate, refactor, and debug code in context. Many developers report 2-3x productivity gains. The tight editor integration and multi-file awareness make it the preferred choice for developers who want AI built directly into their editing environment.

GitHub Copilot was the original AI coding assistant but has dropped to 35% usage and is now a notable player rather than the leader it once was. Still has a large install base and is good for autocomplete and simple code generation. The tight GitHub integration keeps it relevant for teams heavily invested in the GitHub ecosystem, but both Claude Code and Cursor have surpassed it for complex multi-file changes and agentic workflows.

OpenAI Codex is a notable player at 26% usage with explosive growth. It brings OpenAI's models into a cloud-based coding agent that can run code, install packages, and execute multi-step development tasks. Worth watching as OpenAI continues to invest in developer tooling.

Replit offers AI-powered development environments that lower the barrier to building software. Their Replit Agent, which succeeded the earlier Ghostwriter feature, builds apps from natural language descriptions.

Agent platforms and toolkits

NVIDIA Agent Toolkit provides pre-built components for deploying AI agents in enterprise environments. Combined with NemoClaw, NVIDIA is building a full agent stack from chip to application.

Alibaba Wukong is an agent platform out of Alibaba Cloud that handles multi-agent orchestration for e-commerce, logistics, and customer service workflows. If you are operating in APAC markets, it is worth evaluating.

Customer experience

Intercom Fin is one of the better AI customer support implementations. It uses your help docs and past conversations to resolve customer queries without human intervention. Companies report 30-50% of support tickets handled automatically.

Zendesk AI provides similar capabilities within the Zendesk ecosystem. Automated ticket routing, suggested responses, and knowledge base generation.

Vertical applications

Clinomic Mona is worth calling out in healthcare. It provides AI-assisted clinical decision support at the point of care. Healthcare AI is a space where getting it right has life-or-death consequences, and Clinomic is one of the companies taking that seriously.

Harvey (legal AI), Abridge (healthcare documentation), BloombergGPT (financial analysis), and dozens of other vertical-specific AI applications continue to grow. For mid-market companies in regulated industries, these purpose-built tools often outperform general-purpose AI because they understand industry-specific terminology, compliance requirements, and workflows.

Content and media generation

This category barely existed two years ago. Now you can generate broadcast-quality video, photorealistic images, and human-sounding voice audio from a text prompt for less than the cost of a stock photo license. The tools are good enough that the question is no longer "can AI do this?" but "should we still be paying agencies $5,000 per video?"

The China AI story extends here too. ByteDance and Kuaishou are shipping video generation models that match or beat their American competitors, and they are doing it at lower price points.

Video generation

Seedance (ByteDance) is arguably the strongest video generation model available in early 2026. Version 1.0 generates 1080p and 2K video from text or images, with clips running 2 to 12 seconds. Version 2.0, released in early 2026, added multimodal inputs (text, image, audio, and video combined) plus audio-video joint generation. The output quality is noticeably better than most competitors on motion smoothness and character consistency. Advertising agencies and game studios have adopted it for prototyping and pre-visualization. It fits the broader Chinese AI pattern alongside DeepSeek and Qwen: rapid iteration, massive training data, and aggressive pricing.

Sora 2 (OpenAI). NOTE: OpenAI has now discontinued Sora, so the details below describe the product as it was offered. It generated cinematic video with synchronized audio. API pricing ran $0.10 to $0.50 per second depending on resolution, so a 10-second clip cost $1 to $5. ChatGPT Plus ($20/month) included about 50 videos, Pro ($200/month) about 500. Its audio sync quality led the field. For teams already on the OpenAI stack, the integration was straightforward.

Runway (Gen-4 and Gen-4.5) has the most complete creative toolkit: text-to-video, image-to-video, motion brush, inpainting, lip sync, and a unique performance capture feature called Act-Two. Subscriptions run from free (watermarked, limited credits) to $76 to $95 per month for unlimited use. Film and TV post-production teams favor Runway because it integrates with existing creative workflows.

Pika is the budget-friendly option at $8 to $76 per month depending on tier. The creative manipulation tools (Pikatwists for complex motion, Pikaswaps for object replacement) are distinctive. Best suited for social media teams producing high volumes of short-form content.

Kling (Kuaishou) offers strong multi-angle subject consistency at competitive pricing. Plans range from free to $180 per month, with API access at $0.08 to $0.17 per second. Like Seedance, it is part of the Chinese video generation wave. Good for character-driven short-form content.
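To see how per-second pricing turns into a budget, here is a minimal Python sketch using the per-second API rates quoted above for Sora 2 and Kling. It assumes billing is strictly linear in clip length, with no minimums or surcharges beyond the quoted range, and the helper names are hypothetical. Sora 2 is included only because its rates let you check the worked example in the text ($1 to $5 for a 10-second clip).

```python
# Per-second API rates quoted in this section, as (low, high) in US dollars.
# Prices drift quickly; update these before relying on the output.
RATES_PER_SECOND = {
    "Sora 2": (0.10, 0.50),
    "Kling": (0.08, 0.17),
}


def clip_cost_range(model: str, seconds: float) -> tuple[float, float]:
    """Return the (low, high) cost of one clip, assuming linear per-second billing."""
    low, high = RATES_PER_SECOND[model]
    return round(low * seconds, 2), round(high * seconds, 2)


def monthly_cost_range(model: str, seconds: float, clips: int) -> tuple[float, float]:
    """Scale the single-clip range to a hypothetical monthly clip count."""
    low, high = clip_cost_range(model, seconds)
    return round(low * clips, 2), round(high * clips, 2)


if __name__ == "__main__":
    for name in RATES_PER_SECOND:
        low, high = clip_cost_range(name, 10)
        print(f"{name}: 10-second clip costs ${low:.2f} to ${high:.2f}")
```

Plugging in your own clip length and monthly volume makes it easy to compare against what an agency charges per video.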

Image generation

Midjourney remains the gold standard for visual quality. Plans from $10 to $120 per month, with unlimited generation on Standard tier and above using Relax mode. The output aesthetic is still the best in the field for creative and artistic work. No free trial.

DALL-E 3 and GPT Image (OpenAI) integrate natively into ChatGPT and the OpenAI API. The newer GPT Image models start at $0.005 per image, which makes them extremely cost-effective at scale. Prompt adherence and text rendering are strong. If your team already uses ChatGPT, this is the lowest-friction option.

Stable Diffusion 3 (Stability AI) is the open source option. Runs locally on consumer GPUs with no data sent to external servers. Free for self-hosted use, or $0.003 to $0.08 per image through API providers. The community ecosystem of fine-tuned models is massive. This is the right choice for companies with on-premises requirements or data sensitivity concerns in healthcare, legal, or defense.

Ideogram has the best text rendering in generated images, period. If you need readable text in logos, banners, or signage, Ideogram is the tool. Pricing ranges from free (20 prompts per day) to about $42 per month for Pro, and API access costs $0.03 per image.

Flux (Black Forest Labs, founded by former Stability AI researchers) delivers the best photorealism in the open-weights space. Official API pricing is about $0.03 per megapixel. Open weights mean you can deploy it on your own infrastructure. Strong for product visualization and architectural rendering.
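Per-image and per-megapixel prices are easy to misjudge at volume. This sketch prices a hypothetical batch of 10,000 images using the entry-level GPT Image price, the Ideogram API price, and Flux's per-megapixel rate from this section. It assumes linear billing with no rounding, minimums, or volume discounts, and the constants and function names are illustrative, not any provider's SDK.

```python
# Prices quoted in this section (US dollars). Assumption: billing is linear,
# with no per-image minimums, megapixel rounding, or volume discounts.
PER_IMAGE = {
    "GPT Image (entry price)": 0.005,
    "Ideogram API": 0.03,
}
FLUX_PER_MEGAPIXEL = 0.03


def flux_image_cost(width: int, height: int) -> float:
    """Cost of one Flux image at the given pixel dimensions."""
    megapixels = width * height / 1_000_000
    return FLUX_PER_MEGAPIXEL * megapixels


def batch_cost(per_image: float, images: int) -> float:
    """Total cost of a batch at a flat per-image price, rounded to cents."""
    return round(per_image * images, 2)


if __name__ == "__main__":
    volume = 10_000  # hypothetical monthly volume
    for name, price in PER_IMAGE.items():
        print(f"{name}: ${batch_cost(price, volume):,.2f} for {volume:,} images")
    flux = flux_image_cost(1024, 1024)
    print(f"Flux at 1024x1024: ${flux:.4f} per image, ${batch_cost(flux, volume):,.2f} total")
```

A 1024x1024 image is slightly over one megapixel, so under these assumptions Flux's effective per-image price lands a little above $0.03.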

Audio and voice generation

ElevenLabs is the industry leader in voice quality. 32 languages, over 10,000 voices, and voice cloning that sounds disturbingly real. Plans range from free (10,000 credits per month) to $1,320 per month for enterprise. They recently cut conversational AI pricing by 50%, which matters if you are building voice-based customer service bots. Latency is as low as 250 to 300 milliseconds for real-time applications.

PlayHT competes with ElevenLabs on language coverage (800+ voices in 140+ languages) and offers an unlimited plan at $29 to $99 per month. The API-first approach makes it a good fit for integration into existing products.

Suno handles music generation. Describe what you want in text and get a complete, radio-quality track in minutes. Pro is $10 per month for about 250 songs; Premier is $30 per month for about 1,000. Commercial use is allowed on paid plans. Marketing teams use it for background music, podcast intros, and video soundtracks. At these prices, it undercuts traditional stock music libraries by an order of magnitude.
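A quick way to sanity-check subscription tiers is to compute the effective price per unit. The sketch below does that for the two Suno plans quoted above, assuming the "about 250" and "about 1,000" song allowances hold and that you use the full allowance. `cheapest_plan_for` is a hypothetical helper, not part of any Suno API.

```python
# Suno plan figures quoted above: (monthly price in USD, approximate songs per month).
SUNO_PLANS = {
    "Pro": (10.0, 250),
    "Premier": (30.0, 1_000),
}


def cost_per_song(plan: str) -> float:
    """Effective cost per song if the full monthly allowance is used."""
    price, songs = SUNO_PLANS[plan]
    return price / songs


def cheapest_plan_for(songs_needed: int) -> str:
    """Return the cheapest single plan whose allowance covers songs_needed."""
    eligible = [
        (price, name)
        for name, (price, songs) in SUNO_PLANS.items()
        if songs >= songs_needed
    ]
    if not eligible:
        raise ValueError("no single plan covers that volume")
    return min(eligible)[1]
```

At full use, Pro works out to about 4 cents per song and Premier to about 3 cents.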

For mid-market companies, the practical takeaway is that content production costs just dropped by 80% or more. A marketing team of three can now produce video, image, and audio content that would have required a production studio 18 months ago. The tools are not perfect, and you still need humans directing the output and catching quality issues. But the economics have fundamentally changed.

The vertical application space is where I expect the most growth over the next 12-18 months. General-purpose AI tools are becoming commoditized. The real value is in applications that deeply understand a specific industry's data, regulations, and workflows. A legal AI that knows case law precedent is worth more than a general chatbot that can kind of summarize a contract.

For mid-market companies evaluating AI investments, start at Layer 8. Find the application that solves your most painful problem. Then work backward through the stack to understand what infrastructure you need to support it. For guidance on building an AI strategy that starts with business value, see our guide to generative AI consulting services. Too many companies start at Layer 1 (buying hardware) or Layer 4 (picking a model) without knowing what they are building toward. That is how you end up with expensive infrastructure and no business value.
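The "start at Layer 8 and work backward" advice can be made concrete by writing the stack down as an ordered list. This minimal Python sketch uses the eight layer names from this article and returns them in top-down evaluation order; the function name is hypothetical, and it illustrates the ordering rather than serving as a planning tool.

```python
# The eight layers named in this article, listed bottom (Layer 1) to top (Layer 8).
LAYERS = [
    "Silicon and Hardware",
    "Cloud and Compute Infrastructure",
    "Data Infrastructure",
    "Foundation Models",
    "Model Development and MLOps",
    "Orchestration and Agent Frameworks",
    "AI Safety and Governance",
    "Applications and AI Agents",
]


def evaluation_order(layers: list[str]) -> list[tuple[int, str]]:
    """Return (layer number, name) pairs from the application layer down to silicon."""
    numbered = list(enumerate(layers, start=1))
    return list(reversed(numbered))


if __name__ == "__main__":
    for number, name in evaluation_order(LAYERS):
        print(f"Layer {number}: {name}")
```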

This is a living document

The AI landscape changes fast. Companies that were not on anyone's radar six months ago can become category leaders overnight. DeepSeek is a good example. So is Cursor. And the Groq acquisition by NVIDIA shows that even companies doing genuinely novel work can get absorbed by the incumbents.

I built this chart to help a colleague, but hopefully other executives and decision-makers can use it to see the full picture of how AI fits together. I know I did not get everything right. If you think a company is missing, miscategorized, or does not belong, I want to hear from you.

Reach out to me at chris@labattsimon.com, visit labattsimon.com, or connect with me on LinkedIn. I will keep updating this as things evolve.

Last updated: March 2026

Frequently Asked Questions

What are the 8 layers of the AI stack?

The 8 layers are: Silicon and Hardware, Cloud and Compute Infrastructure, Data Infrastructure, Foundation Models, Model Development and MLOps, Orchestration and Agent Frameworks, AI Safety and Governance, and Applications and AI Agents. Each layer builds on the ones below it.

Which foundation model should my company use?

It depends on your use case. Anthropic Claude leads for complex reasoning and enterprise safety. OpenAI GPT has the widest ecosystem. Google Gemini offers the best price-performance ratio. Meta Llama 3 is the strongest open source option for companies that want to keep data on-premises.

Does my company need to buy its own AI hardware?

Not necessarily. Most companies start with cloud-based AI compute. However, once monthly cloud AI spending exceeds $2,000 to $3,000, owning hardware often makes more financial sense. A Mac Studio with an M4 Ultra chip can run capable open source models locally for a one-time cost of $7,000.
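The break-even math behind that hardware threshold is simple enough to sketch. This Python example assumes the hardware fully replaces the cloud spend and that a local open source model is good enough for the workload, both of which you should verify. `monthly_running_cost` is a hypothetical allowance for power, maintenance, and staff time that defaults to zero.

```python
def breakeven_months(hardware_cost: float, monthly_cloud_spend: float,
                     monthly_running_cost: float = 0.0) -> float:
    """Months until a one-time hardware purchase pays for itself.

    Assumes the hardware fully replaces the cloud spend. monthly_running_cost
    is a hypothetical allowance for power, maintenance, and staff time.
    """
    monthly_savings = monthly_cloud_spend - monthly_running_cost
    if monthly_savings <= 0:
        raise ValueError("hardware never pays back at this spend level")
    return hardware_cost / monthly_savings


if __name__ == "__main__":
    for spend in (2_000, 3_000):
        months = breakeven_months(7_000, spend)
        print(f"${spend:,}/month cloud spend: payback in {months:.1f} months")
```

At the quoted figures, a $7,000 machine pays for itself in roughly 2.3 to 3.5 months, before any running costs are subtracted.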

Which layer of the AI stack delivers the most business value?

Layer 8 (Applications and AI Agents) delivers the most direct business value. Start there by identifying the application that solves your most painful problem, then work backward through the stack to determine what infrastructure you need to support it.

What do agent orchestration platforms do?

Agent orchestration platforms like OpenClaw and NemoClaw let AI agents plan multi-step tasks, use external tools (Jira, Salesforce, GitHub), and take actions autonomously. They turn AI from something that answers questions into something that actually does work across your business systems.
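The plan, act, observe pattern these platforms share can be sketched in a few lines of Python. This is not the API of OpenClaw, NemoClaw, or any other framework: the planner is hard-coded and the two tools are hypothetical stand-ins that return strings instead of calling Jira or another real system.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    tool: str
    argument: str


# Hypothetical stand-ins for integrations such as Jira or GitHub. A real platform
# would call those systems' APIs; these return strings so the loop runs offline.
TOOLS: dict[str, Callable[[str], str]] = {
    "search_tickets": lambda query: f"3 open tickets match '{query}'",
    "create_ticket": lambda title: f"created ticket '{title}'",
}


def plan(goal: str) -> list[Step]:
    """Hypothetical fixed planner. In a real framework, a model produces this plan."""
    return [
        Step("search_tickets", goal),
        Step("create_ticket", f"Follow up: {goal}"),
    ]


def run_agent(goal: str) -> list[str]:
    """Plan, execute each tool call in order, and collect the observations."""
    observations = []
    for step in plan(goal):
        tool = TOOLS.get(step.tool)
        if tool is None:
            observations.append(f"unknown tool: {step.tool}")
            continue
        observations.append(tool(step.argument))
    return observations
```

Production frameworks typically let the model re-plan after each observation and add guardrails such as approval steps before write actions; this sketch executes a fixed plan to keep the loop visible.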