In Star Trek, nobody really works. Not in the way we understand it. The ship's computer and automated systems handle most of the labor. People spend their time exploring new worlds, running experiments, learning, debating philosophy over synthesized tea. It's a future where humans are free to do the things that actually make them human. For decades that felt like pure science fiction. But AI agents are now writing code, managing finances, drafting documents, and running operations around the clock without rest. The gap between that fictional universe and our real one is shrinking faster than most people realize. So the question worth sitting with is a simple one. Is that actually the world we're building toward?

Your Next Employee Never Sleeps

Tony Wu left xAI in early 2026 with a single conviction. "In an era with full possibilities," he wrote, "a small team armed with AI can move mountains and redefine what's possible." He wasn't being poetic. He was describing a business model.

Something fundamental is changing about how work gets done. Not in a vague, futuristic way. Right now. AI agents don't clock out. They don't need weekends. They don't send Slack messages saying they're "heads down" while scrolling Twitter. And for the first time, a single person can run five, ten, or twenty of them in parallel, each working on a different project, each making progress while the person managing them eats dinner or sleeps.

This isn't about replacing workers. It's about what happens when one worker can do the work of an entire department. And the honest answer is that nobody fully knows yet. But the early signals are loud enough to pay attention to.

The $100-a-Month Team

Jason Wenk, the CEO of financial platform Altruist, said something in early 2026 that sent shockwaves through Wall Street. "This architecture we're using to build Hazel, it can replace any job in wealth management. Usually these jobs are done by entire teams and they'll be done with AI effectively for $100 a month."

That single statement helped drive Charles Schwab, Raymond James, and LPL Financial stocks down more than 7% each in a single day. Not because Altruist was some untested startup with a pitch deck. They already serve roughly a third of all independent financial advisors in the US.

Wenk's comment points to a pattern that's repeating across industries. The cost of getting work done is collapsing. And when the cost of labor drops that dramatically, the entire structure around managing that labor has to change too.

The Shift Nobody Trained For

Managing people and managing AI agents are fundamentally different skills. A good people manager reads body language, navigates office politics, coaches through personal struggles, and builds trust over months or years. None of that applies to agents.

Managing agents is closer to being an air traffic controller. You're monitoring multiple processes at once, deciding which ones need human intervention, catching errors before they compound, and constantly reprioritizing. The emotional intelligence that made someone a great team lead in 2023 matters less than the ability to think in systems and spot patterns across parallel workstreams.

A November 2025 report from McKinsey Global Institute found that current technologies could automate about 57% of US work hours, with AI agents alone capable of handling tasks that occupy 44% of those hours. But the report also emphasized something often overlooked. The bottleneck isn't the technology. It's the management layer. McKinsey found that maximum value comes when AI agents act as "virtual coworkers" embedded in redesigned workflows, fundamentally shifting the human role from executor to orchestrator. Companies that can't figure out how to orchestrate AI alongside human workers (a core capability of a fractional Chief AI Officer) will fall behind companies that can.

Ethan Mollick, a professor at Wharton who has been studying AI's impact on work more closely than almost anyone, put it bluntly in his research. When his students used AI agents to complete consulting-style tasks, the ones who treated the AI like a junior employee and micromanaged every output performed worse than those who learned to set goals, check results, and stay out of the way. The best "managers" of AI were the ones who gave clear instructions, then let the system run.

One Person, Five Projects

Here's where things get strange. Traditional management assumes a roughly 1-to-1 relationship between a person and a project. Maybe you juggle two or three things, but there's a ceiling. Your attention is finite. Your hours are finite.

AI agents break that ceiling.

Robots and Pencils, a fast-growing AI engineering firm, has restructured around what they call "velocity pods." Small teams that use agentic platforms to deliver outcomes in weeks instead of months. They explicitly don't build big teams. They build small ones and multiply their output with AI.

Blitzy, a company building agentic software development tools, claims their enterprise clients use thousands of specialized agents to ingest millions of lines of code in a single pass. Their pitch is that over 80% of sprint work gets done autonomously. Whether that number holds up across every use case is debatable. But the direction is clear. The ratio of humans to output is shifting fast.

Imagine a product manager in 2027 who wakes up, checks the overnight output of three AI agents (one did market research, one drafted a product spec, one ran competitive analysis), gives feedback to each in about 20 minutes, then kicks off two more agents to handle the next phase while she moves on to a completely different project. By lunch, she's made meaningful progress on five initiatives. Not surface-level progress. Real work.

That's not science fiction. People are doing crude versions of this today with tools like Claude, Cursor, and various agentic coding frameworks. The infrastructure is just going to get better.

What Happens to the Middle?

This brings up an uncomfortable hypothesis. If one person plus AI agents can do the work of a team, what happens to middle management?

A Gartner prediction that's now playing out in real time flagged middle management as one of the roles most exposed to AI displacement. The research firm projected that by 2026, 20% of organizations would use AI to flatten their structures, eliminating more than half of current middle management positions. In early 2026, we're seeing exactly that. Companies like ASML are cutting layers of management to "become less bureaucratic," and Amazon's CEO Andy Jassy has said plainly that as AI agents roll out, "we will need fewer people doing some of the jobs that are being done today." January 2026 alone saw over 108,000 job cuts announced in the US, the highest January total since 2009.

But there's a counter-argument worth considering. Maybe middle management doesn't disappear. Maybe it transforms into something more like "agent operations." Someone has to decide which agents to deploy, how to evaluate their output, when to override them, and how to integrate their work into a larger strategy. This is exactly the role a fractional CAIO fills for mid-market companies. That's still management. It just looks very different.

Will Rhind, the CEO of GraniteShares Advisors, captured the current mood perfectly. "I have no idea what's next. The story from last year was we all believe in AI, but we're searching for the use case. And when we keep discovering the use cases that are seemingly more and more powerful and more and more compelling, it's now leading to disruption."

The Always-On Problem

There's a darker side to agents that never sleep. If your AI workforce runs 24/7, the temptation to also be "on" 24/7 becomes enormous.

We already went through this once with smartphones and email. The boundary between work and life dissolved, and it took years of cultural pushback to even partially restore it. AI agents could trigger the same cycle, but worse. Because it's not just that your boss can email you at midnight. It's that your agents produced 40 pages of output overnight, and if you don't review it by morning, you're already behind.

January 2026 data from Gallup found that only 31% of US employees are engaged at work, down from a 36% peak in 2020. That five-point decline represents roughly 8 million fewer engaged workers. Younger workers have been hit hardest, with Gen Z and younger millennials seeing an eight-point drop. Adding always-on AI agents to that mix could go either way. It could free people from drudge work and give them more creative, fulfilling tasks. Or it could create a new kind of anxiety where you're never really done because the machines never are.

The companies that get this right will probably be the ones that set explicit norms. Agents run overnight, but humans review output at scheduled times. Just because the work can happen at 3am doesn't mean you need to be there when it does.

The Small Team Hypothesis

Here's a hypothesis worth watching. By 2028, the most successful new companies will have fewer than 20 employees but output that rivals firms with hundreds.

We're already seeing the early version of this. Sam Altman has talked about the "one-person billion-dollar company" concept. It sounded like hype when he first said it. It sounds less like hype now.

Altruist has about 5,700 customers and is the third-largest platform in its industry. They didn't get there by building a massive sales team. They got there by building AI tools that made their small team wildly productive.

The xAI exodus is another data point. Elon Musk responded to the wave of departures by saying "We are accelerating faster than any other AI organization on earth despite being a much smaller team." Whether that's true or just Musk being Musk, the underlying belief is telling. He genuinely thinks fewer people plus better AI equals faster results.

If this hypothesis holds, it changes hiring completely. As I argued in my piece on how AI killed the traditional developer hire, companies will stop looking for people who can do a specific job and start looking for people who can manage systems that do many jobs. The premium skill becomes orchestration, not execution. Building the right enterprise AI stack is what makes this orchestration possible.

The Leverage Gap

There's an equity dimension here that doesn't get enough attention. If AI agents multiply individual output by 10x or 50x, the people who learn to use them well will pull dramatically ahead of those who don't.

This isn't just about income. It's about career velocity, job security, and professional relevance. A junior analyst who can run five agents and produce the output of a senior team becomes incredibly valuable. A senior analyst who refuses to work with agents becomes increasingly expensive for what they deliver.

Recent research from Harvard's Digital Data Design Institute, studying workers at Procter & Gamble and Boston Consulting Group, found that performers on the lower half of the skill spectrum saw 43% performance gains when equipped with AI, while top-half performers saw a 17% boost. The researchers also found that AI leads to more homogenized results, meaning "humans have more diverse ideas, and people who use AI tend to produce more similar ideas." The workers who improved most were the ones who figured out the boundaries of when to lean on AI and when to lean on human creativity.

This suggests the future workforce won't split neatly into "AI users" and "non-AI users." It'll split into people who understand how to direct AI effectively and people who don't. And that split will matter more than almost any traditional credential.

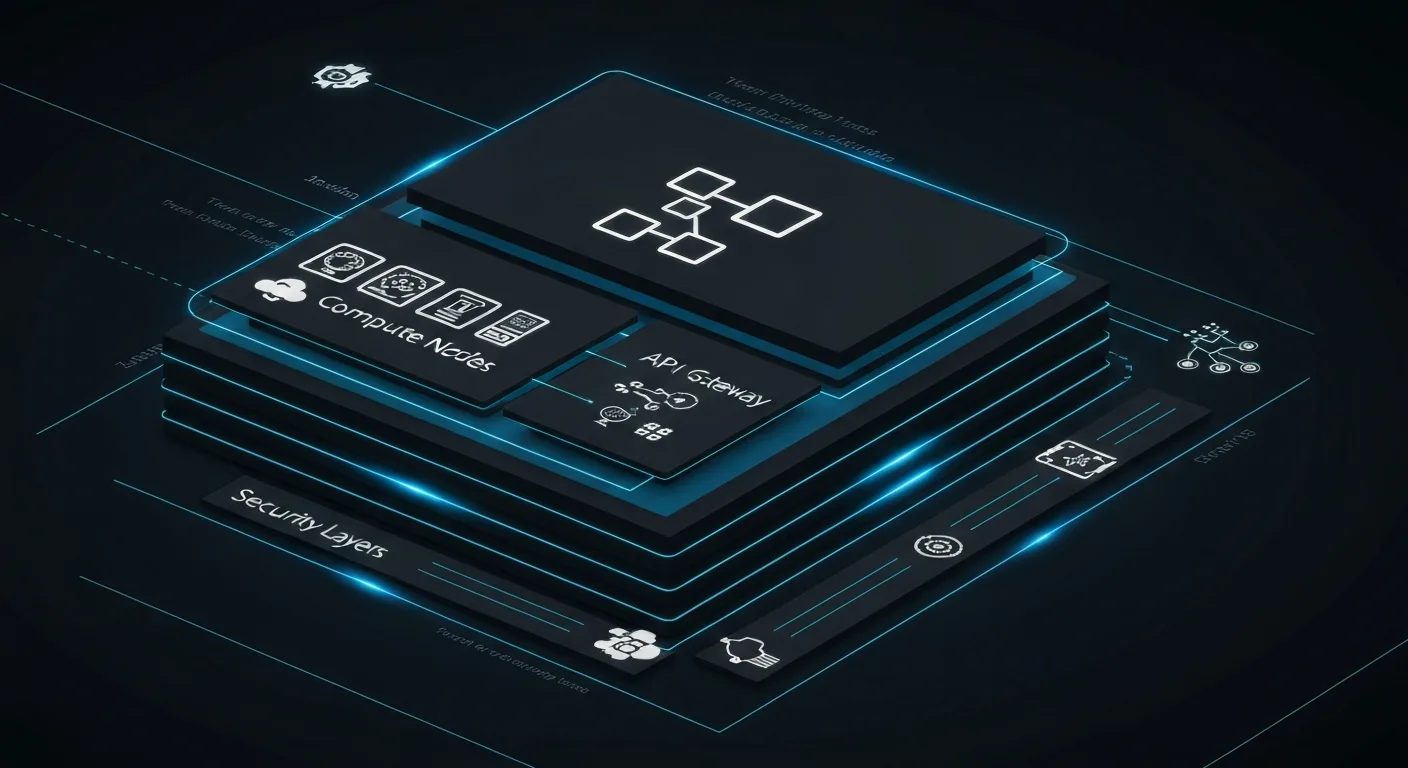

The Infrastructure Reality

Here's what most articles about AI agents leave out: the operational reality of actually running them at scale. The demos look clean. The case studies sound effortless. But when you go to deploy a multi-agent workflow in a real company with real data, real security requirements, and real integration points, you run into costs and complexity that nobody put in the brochure.

Start with compute. Running a single sophisticated agent for a few hours might cost you a few dollars in API fees. That's manageable. But when you're running 20 agents in parallel, 8 hours a night, processing large document sets or complex codebases, costs stack up fast. Anthropic's Claude API, OpenAI's GPT-4o, and Google's Gemini Pro all price by token. A single overnight research run that ingests hundreds of documents and generates detailed outputs can burn through $50 to $200 in API costs depending on context size and model choice. Multiply that across departments and it's a real budget line item, not a rounding error.
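To make the token math concrete, here is a minimal sketch of how you might estimate spend before a run. The prices, token counts, and the 22-nights-per-month figure are placeholder assumptions of mine, not any vendor's actual rate card, so plug in your provider's current numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPrice:
    """Hypothetical USD prices per million tokens (not a real rate card)."""
    input_per_mtok: float
    output_per_mtok: float


def run_cost(input_tokens: int, output_tokens: int, price: TokenPrice) -> float:
    """Cost in USD of one agent run that is billed by token."""
    input_cost = (input_tokens / 1_000_000) * price.input_per_mtok
    output_cost = (output_tokens / 1_000_000) * price.output_per_mtok
    return input_cost + output_cost


def monthly_cost(cost_per_run: float, runs_per_night: int, nights: int) -> float:
    """Spend for one agent repeating the same run on a nightly schedule."""
    return cost_per_run * runs_per_night * nights


# Assumed prices: $3 per million input tokens, $15 per million output tokens.
ASSUMED_PRICE = TokenPrice(input_per_mtok=3.0, output_per_mtok=15.0)

# Assumed workload: documents get re-read across many calls, so input
# tokens dominate (20M in, 1M out).
single_run = run_cost(20_000_000, 1_000_000, ASSUMED_PRICE)
monthly = monthly_cost(single_run, runs_per_night=1, nights=22)

print(f"one overnight run: ${single_run:,.2f}")
print(f"one agent, 22 nights: ${monthly:,.2f}")
```

Under those assumptions a single run lands at $75, inside the $50 to $200 range above, and one agent running nightly comes to $1,650 a month. The useful part is not the specific number but seeing which input dominates: for document-heavy work it is almost always input tokens.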

Then there's the platform question. You have choices: build your own agent orchestration layer using frameworks like LangChain, LlamaIndex, or Microsoft's AutoGen. Or you pay for managed platforms like Relevance AI, Zapier Central, Lindy, or enterprise offerings from Salesforce and Microsoft that bundle agents into existing software. Building gives you control but requires serious engineering. Buying gives you speed but creates vendor dependency and often limits what your agents can actually do.

Monitoring is where most early deployments fall apart. Agents make mistakes. They hallucinate. They misinterpret ambiguous instructions. They get stuck in loops. Without visibility into what your agents are actually doing at every step, you won't catch those errors until they've propagated into real decisions. This is why logging, tracing, and evaluation pipelines aren't optional add-ons. They're the job. Tools like LangSmith, Arize, and Weights & Biases are building out observability for AI agents, but this space is still young. Most companies deploying agents today are building more of their own monitoring than they expected to.
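As a rough illustration of what "visibility at every step" can mean in practice, here is a small sketch of a step recorder that also flags an agent repeating the exact same action. The class name, the `max_repeats` threshold, and the step format are all my own assumptions; this is not how LangSmith, Arize, or any other tool works internally.

```python
import json
import time
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class AgentTrace:
    """Records every step an agent takes and flags suspicious repetition."""
    agent_id: str
    max_repeats: int = 3  # assumed threshold; tune per workflow
    steps: list = field(default_factory=list)
    repeat_counts: Counter = field(default_factory=Counter)

    def record(self, action: str, payload: dict) -> bool:
        """Log one step. Returns False once an identical step repeats too often."""
        # Identical action + identical arguments is treated as "the same step".
        key = (action, json.dumps(payload, sort_keys=True))
        self.repeat_counts[key] += 1
        self.steps.append(
            {"ts": time.time(), "agent": self.agent_id,
             "action": action, "payload": payload}
        )
        return self.repeat_counts[key] <= self.max_repeats


# Hypothetical agent that keeps issuing the same search.
trace = AgentTrace("research-1", max_repeats=2)
results = [trace.record("search", {"q": "pricing"}) for _ in range(3)]
print(results)  # [True, True, False]
```

The orchestration loop would pause the agent and notify its owner when `record` returns `False`. Real loops are often fuzzier than exact repeats, so treat this as a first tripwire rather than a complete detector.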

Security is the other conversation nobody wants to have until something goes wrong. Agents that have access to your email, your CRM, your financial systems, and your code repositories are high-value targets. Prompt injection attacks (where malicious content in external documents hijacks an agent's behavior) are a real and underappreciated risk. Giving agents the minimum permissions they need to do their specific job, logging every action they take, and building human approval checkpoints into high-stakes decisions are not best practices. They're table stakes.
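Those three controls (minimum permissions, action logging, human approval) fit in surprisingly little code. The sketch below is one way to express them, with hypothetical tool names and a made-up `ToolGate` class; a production version would sit in front of real API credentials rather than strings.

```python
from dataclasses import dataclass, field
from typing import Optional


class PermissionDenied(Exception):
    """Raised when an agent asks for a tool outside its allowlist."""


class ApprovalRequired(Exception):
    """Raised when a high-stakes tool is called without human sign-off."""


@dataclass
class ToolGate:
    allowed: frozenset                       # tools this agent may use at all
    needs_approval: frozenset = frozenset()  # subset that needs a human
    audit_log: list = field(default_factory=list)

    def authorize(self, tool: str, approved_by: Optional[str] = None) -> None:
        """Log the request, then either allow it or raise."""
        if tool not in self.allowed:
            self.audit_log.append((tool, "denied"))
            raise PermissionDenied(tool)
        if tool in self.needs_approval and approved_by is None:
            self.audit_log.append((tool, "pending_approval"))
            raise ApprovalRequired(tool)
        self.audit_log.append((tool, "allowed"))


# Hypothetical tool names for a support agent.
gate = ToolGate(
    allowed=frozenset({"crm.read", "email.send"}),
    needs_approval=frozenset({"email.send"}),
)
gate.authorize("crm.read")                        # passes silently
gate.authorize("email.send", approved_by="dana")  # passes with sign-off
```

Calling `gate.authorize("email.send")` without an approver raises `ApprovalRequired`, and anything off the allowlist raises `PermissionDenied`. Every request, allowed or not, ends up in `audit_log`, which is the part people tend to skip and later wish they had.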

The honest cost model for a mid-market company running a meaningful agent deployment looks something like this: $2,000 to $10,000 per month in API and platform costs, one or two internal people spending 20% to 40% of their time on agent operations and maintenance, and an upfront investment of $50,000 to $150,000 in workflow design, integration, and testing before any of it runs reliably. That's not prohibitive. But it's not $100 a month, either. The math still works if you're replacing or augmenting work that was costing you $500,000 a year in headcount. It's just a more serious investment than the pitch decks suggest.
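Here is that arithmetic worked through for the first year. The one number I have to assume is the fully loaded annual cost of the internal people doing agent operations; I use $150,000 purely as a placeholder, so swap in your own.

```python
def first_year_cost(
    monthly_platform: int,
    upfront: int,
    people: int,
    time_share_pct: int,
    loaded_salary: int,
) -> int:
    """First-year cost in USD: 12 months of fees, setup, and staff time."""
    staff_time = people * time_share_pct * loaded_salary // 100
    return 12 * monthly_platform + upfront + staff_time


ASSUMED_LOADED_SALARY = 150_000  # placeholder, not a figure from any survey
HEADCOUNT_BASELINE = 500_000     # the annual headcount cost used above

# Low end: $2,000/month, $50,000 upfront, one person at 20% of their time.
low = first_year_cost(2_000, 50_000, 1, 20, ASSUMED_LOADED_SALARY)
# High end: $10,000/month, $150,000 upfront, two people at 40% each.
high = first_year_cost(10_000, 150_000, 2, 40, ASSUMED_LOADED_SALARY)

print(f"first-year range: ${low:,} to ${high:,}")
print(f"share of baseline: {low / HEADCOUNT_BASELINE:.0%} to {high / HEADCOUNT_BASELINE:.0%}")
```

With that salary assumption, the first year runs from $104,000 to $390,000, or roughly 21% to 78% of the $500,000 baseline. The high end leaves much less margin than the low end, which is why the upfront integration estimate deserves the most scrutiny.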

Industries Already Transformed

Enough with the hypotheticals. Let's look at where agents are already doing real work at scale.

Financial Services and Wealth Management

This is the sector moving fastest, partly because the compliance-heavy, document-intensive nature of the work is exactly where agents shine. Morgan Stanley rolled out an AI assistant built on GPT-4 to its 16,000 financial advisors in 2023. By early 2025, advisors were using it to pull client data, generate meeting prep summaries, and draft follow-up notes in a fraction of the time it used to take. The bank reported that advisors using the tool were spending significantly more time in client conversations and less time on administrative work. JPMorgan's COiN (Contract Intelligence) platform has been analyzing legal documents since 2017 and now handles work that previously required 360,000 hours of lawyer time annually. The pattern is consistent: agents handle the documentation, data extraction, and synthesis. Humans handle the judgment and the relationship.

Software Development

GitHub Copilot crossed one million paid subscribers in 2023, and GitHub reported that developers using it completed tasks 55% faster in controlled studies. But individual code completion is just the starting point. By 2025, agentic coding tools like Cursor, Devin, and SWE-agent were handling full feature development cycles: reading a spec, writing code across multiple files, running tests, fixing errors, and opening pull requests without human intervention on each step. Cognition AI's Devin, when released in early 2024, solved 13.86% of real-world GitHub issues end-to-end in benchmarks. That number sounds small until you realize the previous best automated approach solved 1.96% of them. The jump was not incremental. Entire categories of junior software engineering work are now contested territory between humans and agents.

Healthcare Administration

Clinical care requires judgment that agents aren't ready for. Administrative healthcare work is a different story. Prior authorization, billing, coding, appointment scheduling, and clinical documentation are some of the most expensive and error-prone work in the US healthcare system. Nuance's Dragon Ambient eXperience (DAX) is an AI tool now used by over 550 healthcare organizations to transcribe and summarize clinical conversations in real time, cutting the documentation burden that causes physician burnout. Abridge, backed by UPMC and a16z, does similar work with a focus on accuracy and summarization at the point of care. On the administrative side, Waystar and Availity are deploying agents to handle claims scrubbing, denial management, and payer communication. The administrative cost of US healthcare is estimated at over $800 billion annually. That's a target agents will keep hitting.

Legal Services

Harvey AI, backed by OpenAI, was processing legal documents for Allen & Overy (now A&O Shearman) within a year of its founding. The firm gave Harvey access to its full knowledge base and started using it for contract analysis, due diligence, and regulatory research. By 2024, the tool was in use at dozens of Am Law 100 firms. Casetext's CoCounsel, acquired by Thomson Reuters for $650 million in 2023, offered a similar playbook: agents that could review documents, research case law, and draft legal memos in hours rather than days. The billable hour model that has governed legal services for a century is under direct pressure. When an agent can do in two hours what previously took a junior associate two days, the only question is how law firms restructure their economics before clients force them to.

How to Start: A Practical Guide for Mid-Market Companies

I talk to a lot of mid-market operators who see what's happening and feel stuck between two bad options: bet big on AI and risk disrupting something that works, or wait and fall behind. The right path is neither. Here's how I'd approach it if I were running a $50M to $500M company today.

Step 1: Audit your high-volume, rule-based work first.

Agents are best at tasks that are high frequency, rule-based, and well-documented. Invoice processing, sales outreach sequences, support ticket triage, contract review for standard clauses, market monitoring, report generation. Make a list of every task your team does more than 20 times a week. Those are your candidates. Start there, not with the complex judgment-heavy work.
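If your task inventory lives in a spreadsheet, the audit reduces to a filter and a sort. This is a minimal sketch with invented task data; the field names are my own, and the "more than 20 times a week" cutoff is the only number taken from the step above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    runs_per_week: int
    rule_based: bool
    documented: bool


def automation_candidates(tasks, min_runs_per_week=20):
    """Keep frequent, rule-based, documented tasks; highest volume first."""
    picks = [
        t for t in tasks
        if t.runs_per_week > min_runs_per_week and t.rule_based and t.documented
    ]
    return sorted(picks, key=lambda t: t.runs_per_week, reverse=True)


# Invented inventory for illustration only.
inventory = [
    Task("invoice processing", 120, rule_based=True, documented=True),
    Task("support ticket triage", 300, rule_based=True, documented=True),
    Task("pricing strategy review", 2, rule_based=False, documented=False),
    Task("standard-clause contract review", 25, rule_based=True, documented=False),
]

for task in automation_candidates(inventory):
    print(task.name, task.runs_per_week)
```

Only ticket triage and invoice processing survive here. The contract review task is frequent and rule-based but undocumented, which usually means the first job is writing the rules down, not building the agent.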

Step 2: Pick one workflow and actually finish it.

The biggest mistake I see companies make is running five pilots simultaneously and finishing none of them. Pick the single workflow with the highest volume and the clearest success metric. Build an agent for that one thing. Measure it. Fix what's broken. Then move to the next one. Breadth before depth is a recipe for expensive, inconclusive experiments.

Step 3: Choose a platform that matches your technical capacity.

If you have a strong engineering team, explore LangChain or AutoGen for custom orchestration. If you don't, start with a managed platform like Relevance AI, Make.com with AI steps, or Zapier's AI features. The goal in the first 90 days is to ship something that works, not to build the perfect architecture. You'll learn more from a working imperfect agent than from six months of planning the ideal one.

Step 4: Assign a human owner to every agent workflow.

Agents don't manage themselves. Every agent workflow needs a human who owns the output quality, monitors for errors, and has authority to pause or override. This doesn't have to be a full-time job. But it has to be someone's actual responsibility, not something that "the IT team will handle." If nobody owns it, nobody will catch the errors before they matter.

Step 5: Build your evaluation layer before you scale.

Before you go from one agent to ten, build the logging and review process that lets you check output quality systematically. This means spot-checking a percentage of agent outputs daily, setting up alerts for error patterns, and running a weekly review of what the agents did and whether it was correct. This feels like overhead when you're small. It becomes survival when you scale.
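A daily spot check plus an error-rate alert can start as something this small. The 10% sample fraction and 5% alert threshold below are assumptions I picked for the example, and the pass/fail verdicts stand in for whatever your human reviewers actually record.

```python
import random


def spot_check_sample(outputs, fraction, seed=None):
    """Pick a random subset of agent outputs for human review."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    if not outputs:
        return []
    # Always review at least one item, even for tiny batches.
    sample_size = max(1, round(len(outputs) * fraction))
    return random.Random(seed).sample(outputs, sample_size)


def should_alert(verdicts, threshold=0.05):
    """verdicts: list of bools from reviewers, True meaning the output was correct."""
    if not verdicts:
        return False
    error_rate = verdicts.count(False) / len(verdicts)
    return error_rate > threshold


# Hypothetical day: 200 agent outputs, identified here by number.
todays_outputs = list(range(200))
to_review = spot_check_sample(todays_outputs, fraction=0.10, seed=7)

# Suppose reviewers found 2 bad outputs among the 20 sampled.
verdicts = [True] * 18 + [False] * 2
print(len(to_review), should_alert(verdicts))  # 20 True
```

Fixing the seed makes a day's sample reproducible for audits. In production you would log which items were sampled and feed the failed ones into the weekly review.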

Step 6: Be honest with your team about what's changing.

The fastest way to create resistance to AI adoption is to let people find out about it through rumors. Be direct. Explain what you're automating, why, and what it means for their roles. In most mid-market companies, the goal isn't to eliminate jobs. It's to stop hiring for roles that agents can handle and redeploy existing people toward higher-value work. People can handle that conversation. What they can't handle is being blindsided.

The companies I've seen get the most out of AI agents in the first year are the ones that treat it as an operational discipline, not a technology project. The technology is the easy part. Changing how people work, what they're responsible for, and how they measure success is the hard part. That's a leadership challenge, not an engineering one. It's why more companies that need senior AI guidance without committing to a full-time executive hire are bringing in a fractional Chief AI Officer to set the strategic direction from day one and lead the transition.

What Tomorrow's Worker Looks Like

If these trends continue, the worker of 2028 or 2030 probably looks something like this.

They manage a portfolio of projects, not just one job. They spend their mornings reviewing what their agents produced overnight. They make judgment calls about quality, direction, and priorities. They step in for the work that requires genuine human insight, creativity, or relationship-building. And they spend a fair amount of time just thinking, because the bottleneck shifts from "can we get the work done" to "are we doing the right work."

The management layer above them is thinner. Fewer people are needed to coordinate when agents handle the coordination. But the people who remain in management roles are more strategic, more technical, and more accountable for bigger outcomes.

The workday itself might look different too. If agents handle execution around the clock, the human work becomes more about high-quality decision-making in shorter bursts rather than grinding through long hours. A four-day workweek might not just be a perk. It might be the logical structure when most of the repetitive work runs without you.

That's the optimistic version. The less optimistic version is that companies use agents to demand more output from fewer people, everyone burns out trying to keep up, and the gains flow mostly to shareholders. Which version we get depends less on the technology and more on the choices companies and policymakers make in the next few years.

The Only Certainty

Nobody knows exactly how this plays out. Anyone who tells you they do is selling something. But the direction is unmistakable. AI agents are always on. They're getting better fast. And they're changing the math on how many people it takes to get things done.

The workers who thrive will be the ones who learn to work alongside agents, not compete with them. The managers who thrive will be the ones who learn to orchestrate systems, not just lead people. And the companies that thrive will be the ones that figure out this new ratio of humans to AI before their competitors do.

The shift is already underway. The only question is how fast you adapt to it.

Frequently Asked Questions

What are AI agents?

AI agents are autonomous software systems that can plan, execute, and self-correct multi-step tasks without continuous human oversight. Unlike chatbots, agents use tools, make decisions, and operate over extended periods.

How are AI agents different from traditional automation?

Traditional automation follows rigid scripts. AI agents adapt to context, handle exceptions, and improve over time. They can reason about novel situations rather than just following predefined rules.

How much does it cost to deploy AI agents?

For mid-market companies, a production agent deployment typically costs $500-2,000 per month in inference costs, plus $5,000-15,000 in initial setup. Most deployments show positive ROI within 60 days.

Will AI agents replace employees?

Agents are best deployed alongside employees, not as replacements. The highest ROI comes from automating the repetitive 60-70% of a role so humans can focus on judgment, relationships, and creative work.

What skills does it take to manage AI agents?

Managing AI agents requires a different skill set than managing people. The core competencies are systems thinking, prompt engineering, quality evaluation, and resource orchestration. The day-to-day work shifts from coaching individuals to monitoring pipelines, debugging agent behavior, and making judgment calls about where human intervention adds the most value.

How long does it take to see results?

Most companies that follow a focused rollout see measurable results within 30 to 90 days of deploying their first production agent workflow. The key variable is how well-defined the target workflow is before you start. Companies that pick a high-volume, rule-based process with clear metrics typically demonstrate ROI within the first month. Companies that deploy agents against vaguely defined efficiency goals often spend three to six months tuning before they can show concrete numbers. Start with a single workflow and measure the before-and-after rigorously.

How should we handle security and data privacy with AI agents?

This is the question that should come up first in every deployment conversation but usually comes up last. AI agents that connect to your email, CRM, financial systems, or codebase are handling sensitive data by default. Best practices start with the principle of least privilege: give each agent access only to the specific systems and data it needs for its task, nothing more. Log every action an agent takes so you have an audit trail. Build human approval checkpoints into any workflow that involves sending external communications, modifying financial records, or accessing customer PII. Use enterprise-grade API providers that offer data processing agreements and do not train on your inputs. Review prompt injection risks, especially for agents that process external documents or emails, since malicious content in those inputs can hijack agent behavior. And treat your agent credentials the same way you treat admin passwords: rotate them, scope them tightly, and monitor for misuse.